Contra Labs just published the Human Creativity Benchmark REPORT

Here’s what their data actually means for your marketing team…

The short version: Contra Labs is a freelance platform with over 1.5 million creatives. They ran a study to find out how real design professionals judge AI creative work. They tested five creative areas: landing pages, desktop apps, ad images, brand images, and product videos. Their data has obvious implications for creative contractors, but I think there are some major takeaways for in-house marketing teams as well…

(Read the full report here.)

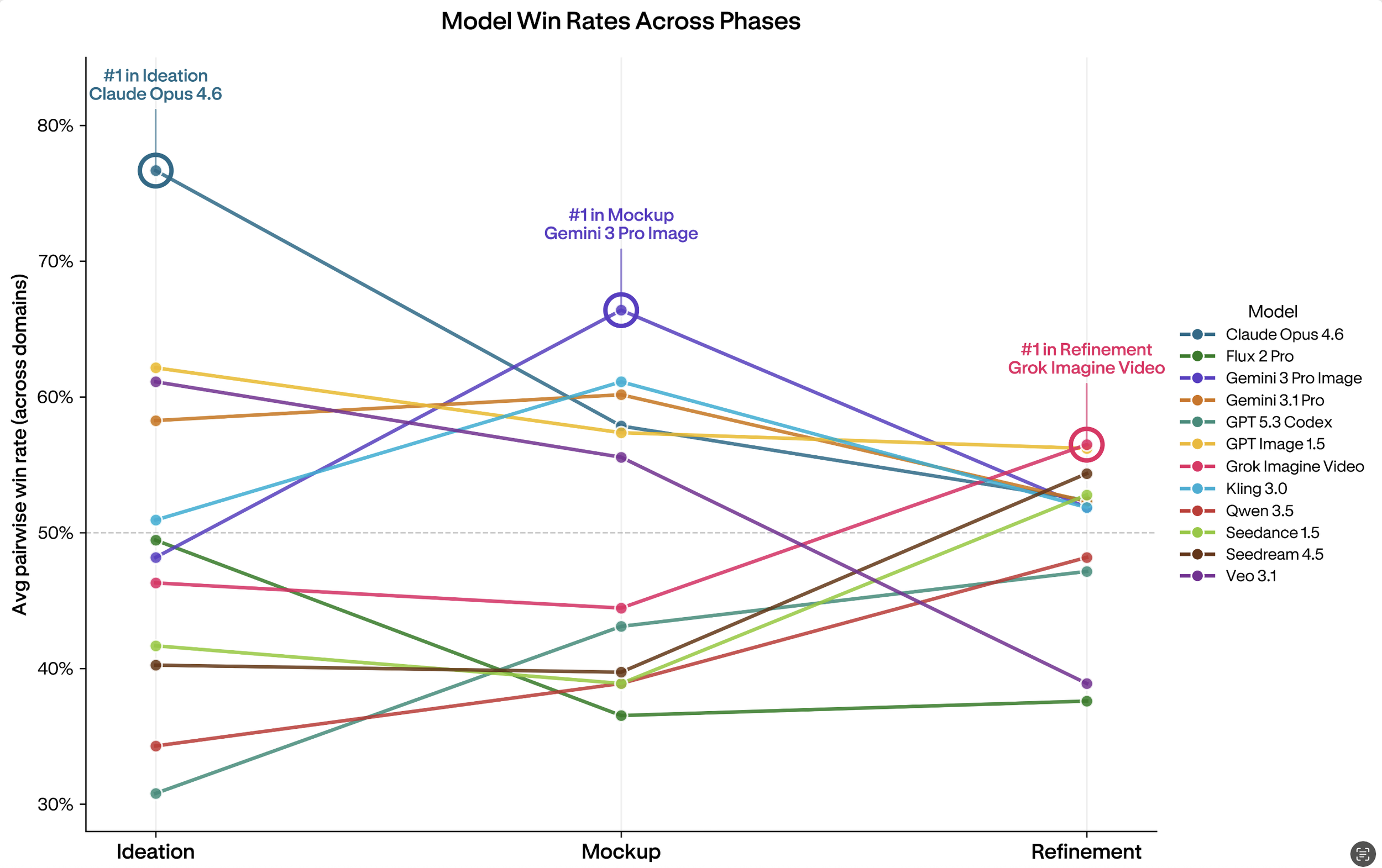

Contra tested AI across three stages of work:

Coming up with ideas

Building mockups

Refining final work

Then, they collected about 15,000 individual judgments. Their big finding?

"Is this AI output good?" is the wrong question.

The right question is: WHO is this AI output good for, at WHAT stage, and for WHAT reason?

Remember: Creative work gets judged two different ways.

The first is stuff everyone agrees on, like "this text is impossible to read" or "the button is broken."

The second is stuff people disagree on, because personal taste is real.

Most AI benchmarks mash these two things together into one score. That is like averaging "did the car start?" with "do you like the color?"

It gives you a number, but the number means nothing useful.
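If you want to see why the mashed-together number is useless, here is a minimal Python sketch. To be clear, this is my own illustration and not Contra's scoring method: the function names, the 0 to 10 taste scale, and the ratings are all made up for the example.

```python
from statistics import mean

def blended_score(passes_basics: bool, taste_ratings: list) -> float:
    """The 'mash it together' approach: one number for everything."""
    basics = 10.0 if passes_basics else 0.0
    return mean([basics, mean(taste_ratings)])

def split_score(passes_basics: bool, taste_ratings: list) -> dict:
    """Keep the two questions apart: did it work, and did people like it?"""
    return {
        "passes_basics": passes_basics,
        "taste_mean": mean(taste_ratings),
        # A wide spread means evaluators disagreed, which is normal for taste.
        "taste_spread": max(taste_ratings) - min(taste_ratings),
    }

# Hypothetical ratings on a 0-10 scale, invented for illustration.
broken_but_pretty = (False, [10.0, 10.0, 10.0])
works_but_plain = (True, [0.0, 0.0, 0.0])

print(blended_score(*broken_but_pretty), blended_score(*works_but_plain))
print(split_score(*broken_but_pretty))
print(split_score(*works_but_plain))
```

Both outputs get the same blended score even though one is unusable and the other is just boring. The split version keeps "did the car start?" and "do you like the color?" as separate answers.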

Here is the twist nobody wants to admit (yet): Not one AI model led all three stages in any creative area.

Not one.

Claude was the best at coming up with ideas.

Gemini was the best at the mockup stage.

Grok and Seedream rose to the top by the refinement stage.

The model that is best at generating ideas is often bad at following through on them. That is not a flaw. It is just the truth about what these tools are built to do.

What This Means for In-House Creative Teams

If your team is using one AI tool for every stage of a creative project, the data says you might be making a mistake.

This study shows clearly that AI models have stages where they do well and stages where they fall apart.

Claude Opus makes great early ideas. The work feels interesting and put-together.

But ask it to do small, targeted edits near the end? It loses ground fast.

Gemini Pro is the opposite. It is great at locking in a design system with the right colors, fonts, and layout.

But try to push it in a different direction and it pushes back.

Grok Imagine Video started in last place and finished first in video refinement. Crazy, huh?

What this means for your teams: We have to stop thinking about AI tools as a "pick one forever" decision.

Think about which tool fits which stage.

The tools you use for early ideas probably should not be the same tools you use for final polish.

At Forrest, we’re doing exactly this. It’s saving us time on some projects, and on others it’s forcing us to learn a better AI workflow where we might have been using the wrong tool.

Everyone is still learning.

If you are building AI-assisted creative workflows, the design challenge is not which model is "best." It is getting the right model in front of your team at the right moment, without making them figure that out on their own.
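One way to take that decision off your team's plate is a simple stage-to-model lookup. The sketch below is only an assumption about how you might wire it up: the stage names come from the study, but the model labels are placeholders (not real API model IDs), and the defaults are loosely based on the stage leaders mentioned above, which varied by creative area.

```python
from enum import Enum
from typing import Dict, Optional

class Stage(Enum):
    IDEATION = "ideation"
    MOCKUP = "mockup"
    REFINEMENT = "refinement"

# Hypothetical defaults. Swap these for whatever your own testing supports.
STAGE_DEFAULTS: Dict[Stage, str] = {
    Stage.IDEATION: "claude",
    Stage.MOCKUP: "gemini",
    Stage.REFINEMENT: "grok",
}

def pick_model(stage: Stage, overrides: Optional[Dict[Stage, str]] = None) -> str:
    """Return the model label for a stage, letting a project override the default."""
    if overrides and stage in overrides:
        return overrides[stage]
    return STAGE_DEFAULTS[stage]

# A project that prefers a different tool for final polish.
image_project = {Stage.REFINEMENT: "seedream"}
print(pick_model(Stage.IDEATION, image_project))
print(pick_model(Stage.REFINEMENT, image_project))
```

The point of the override is that the "right" tool depends on the project type, so the defaults should be easy to change without anyone having to remember the whole table.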

There is also a finding that will be uncomfortable for anyone selling AI creative tools on the idea that you can just give it a brief and walk away.

The most creative models in the idea stage are often the worst at following a specific brief.

Claude in ideation is inspired, but will go off script.

Gemini for the mockup stage stays on brief but will not go creative.

etc…

What This Means for Creative Professionals

You already knew something felt off. The AI output looks fine and you still hate it, but you can't explain why. This research gives you the words for it.

Here is what’s actually happening: Once AI output clears a basic quality bar, like readable text and correct layout, evaluators stop judging on quality and start judging on taste. And as everyone has been saying lately, taste is genuinely personal because it’s INNATELY human.

The study measured this directly.

Visual appeal caused way more disagreement between evaluators than prompt adherence did. That disagreement is not a mistake.

It’s someone being a professional with a REAL point of view.

The practical stuff: usability is the first filter.

In ad design, outputs with the lowest usability scores finished in the top two rankings only 10% of the time. Outputs with the highest usability scores finished in the top two rankings 84% of the time. No amount of beautiful visual work saves a layout that does not work. The evaluators in this study, without talking to each other, followed the same order: Does it work? Does it follow the brief? Does it look good? In that order.

(Hint: If you are using AI for client work at scale like my team is, that is your checklist.)
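Here is that checklist as a rough Python sketch. It is my own simplification, not how the study scored anything: the `Review` fields, the 0 to 10 appeal scale, and the sample outputs are all hypothetical. The idea is that each question only matters once the one before it is answered.

```python
from dataclasses import dataclass

@dataclass
class Review:
    name: str
    works: bool      # Does it work? (readable text, functioning layout)
    on_brief: bool   # Does it follow the brief?
    appeal: float    # Does it look good? (subjective, 0-10)

def rank(reviews):
    """Order outputs by the checklist: works first, then on brief, then appeal."""
    return sorted(
        reviews,
        key=lambda r: (r.works, r.on_brief, r.appeal),
        reverse=True,
    )

# Made-up candidates for illustration.
candidates = [
    Review("gorgeous but broken", works=False, on_brief=True, appeal=9.5),
    Review("plain but solid", works=True, on_brief=True, appeal=6.0),
    Review("pretty but off brief", works=True, on_brief=False, appeal=8.0),
]

for review in rank(candidates):
    print(review.name)
```

Notice that the best-looking output lands last. Appeal only breaks ties between outputs that already work and already follow the brief.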

Another thing worth knowing: the stage you are in COMPLEEEEETELY changes what matters.

In the idea stage, big structure and overall feel are what people focus on.

In the mockup stage, it shifts to design system stuff: did the colors stay consistent, does the layout hold together.

By the refinement stage, evaluators were mostly just talking about typography, button clarity, and spacing. If you are prompting AI tools without thinking about where you are in that process, you are using the wrong tool for the job.

Most creative professionals are not frustrated because AI makes bad work.

They are frustrated because AI makes the same work over and over. This study has a name for that: “mode collapse.”

Even if you tell AI to be risky and bold, it will still give you something safe.

Think about it: when you give multiple models the same brief, they all drift toward safe, middle-of-the-road choices. Ever notice it?

Your job as a creative is NOT ONLY to fight against SAFE like a divorced UFC middleweight trying to win back custody of his kids, but also to know which models to use at which stages, and to build your workflow so your creative direction stays in charge.

My final take:

No AI model was the best at everything, in any category, even once.

Not ONE.

These companies are spending billions of dollars and the best any of them could do was win one phase of one project type.

You and I will get the most value out of these tools (and ironically so will clients) when we learn to use the right tools in the right settings, for our unique creative outputs and offerings!

For the love of humanity,

Your Chief Creative Officer,

BT